Single Decision Authority

Every agent action clears one gate. No parallel paths. No implicit permission by silence.

Governance for AI that acts. Operational control with evidence you can defend.

If AI can access regulated data, call tools, trigger workflows, modify systems, or issue outputs that teams treat as decisions, the problem is not model quality. It is governed execution.

If your AI can act, you need evidence — not reconstruction.

AI systems now read internal data, call tools, change system state, and emit outputs organizations treat as operational decisions. Evidence trails are often thin. Authority is often vague. When scrutiny arrives, everyone becomes a historian.

A fixed-scope diagnostic for agentic systems, connected tools, and AI-enabled workflows. Fixed fee. Executive-ready findings.

Control fails when it is written after the fact. Evidence has to be created at the moment action occurs.


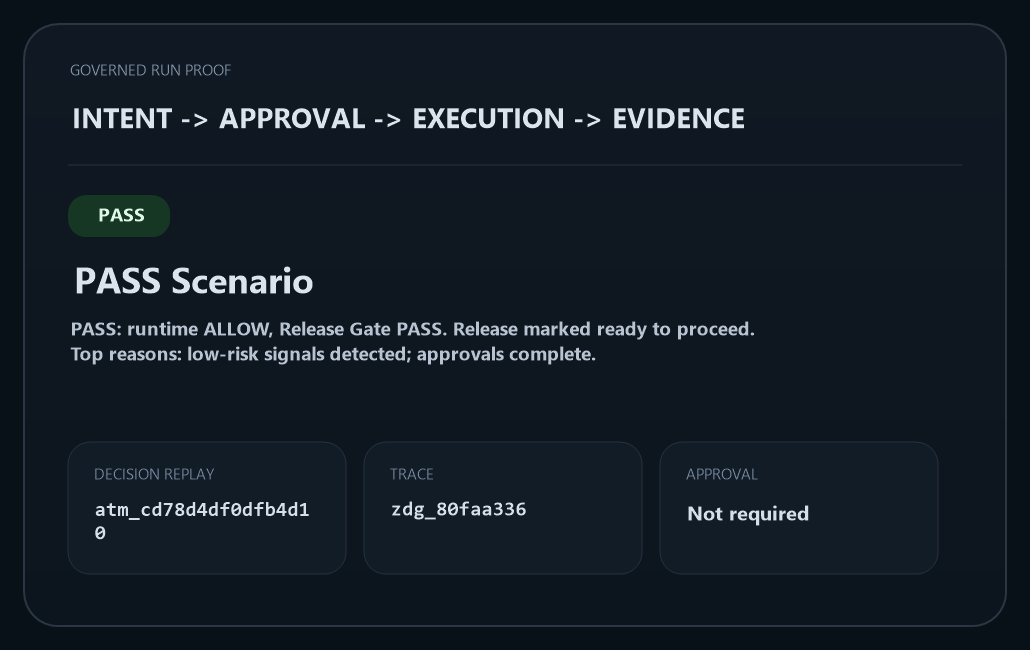

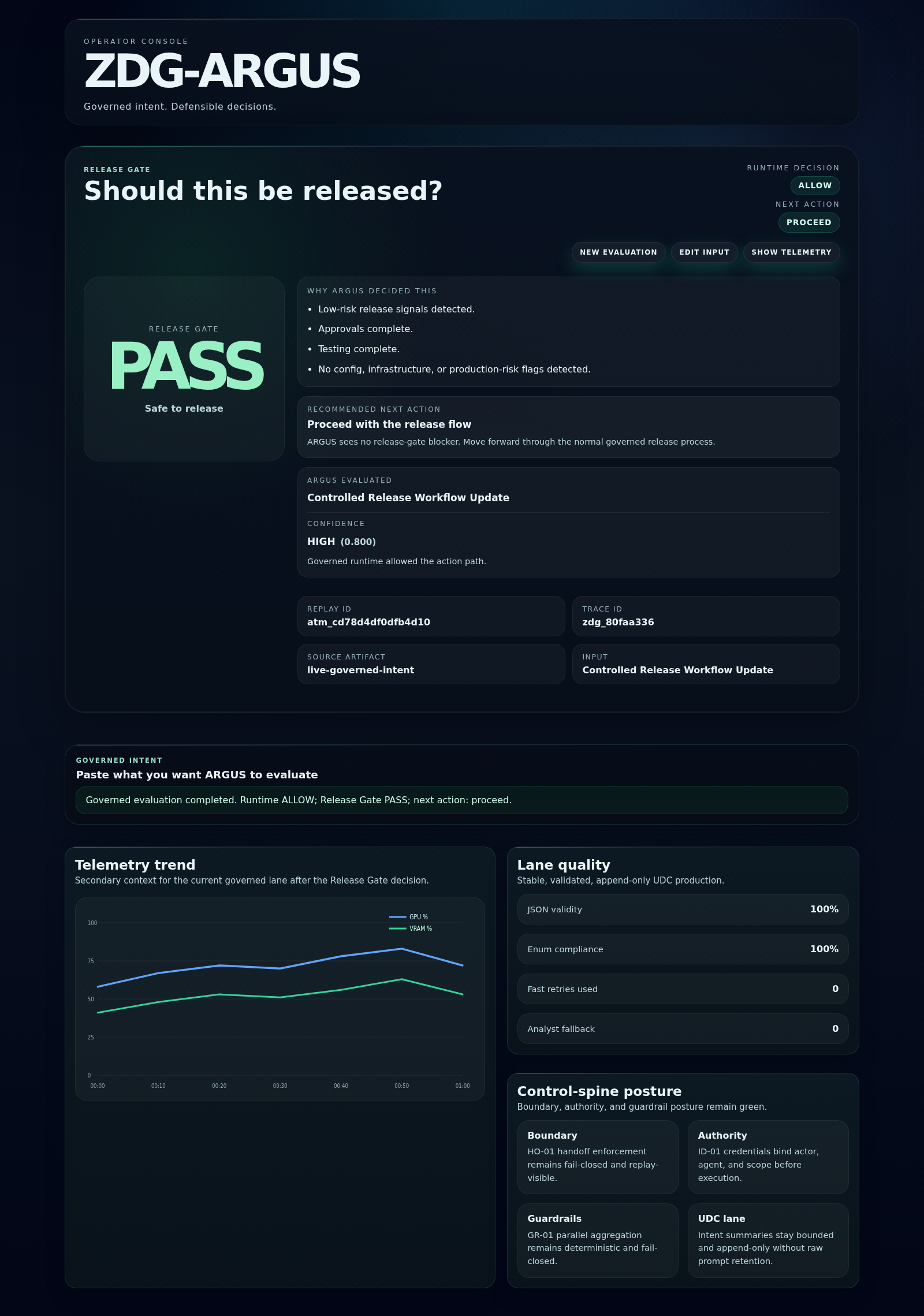

Every decision is recorded with the inputs, the policy version, and the outcome. Replay is possible. Reconstruction is not required.
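A minimal sketch of a replayable decision record, assuming policies are pure functions keyed by a version string. `POLICIES`, `DecisionRecord`, `decide`, and `replay` are hypothetical names for illustration, not the product's API. Replay re-runs the recorded policy version on the recorded inputs and checks that the outcome matches, so a past decision stays checkable after a newer policy version goes live.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict

# Hypothetical policy registry: version string -> pure decision function.
Policy = Callable[[Dict[str, Any]], str]

POLICIES: Dict[str, Policy] = {
    "v1": lambda inp: "ALLOW" if inp["amount"] <= 1000 else "HOLD",
    "v2": lambda inp: "ALLOW" if inp["amount"] <= 500 else "HOLD",
}


@dataclass(frozen=True)
class DecisionRecord:
    task_id: str
    inputs: Dict[str, Any]
    policy_version: str
    outcome: str


def decide(task_id: str, inputs: Dict[str, Any], policy_version: str) -> DecisionRecord:
    """Evaluate inputs under one policy version and capture what is needed to replay it."""
    outcome = POLICIES[policy_version](inputs)
    # Copy the inputs so later changes by the caller cannot alter the record.
    return DecisionRecord(task_id, dict(inputs), policy_version, outcome)


def replay(record: DecisionRecord) -> bool:
    """Re-run the recorded policy version on the recorded inputs; True if the outcome matches."""
    return POLICIES[record.policy_version](record.inputs) == record.outcome


rec = decide("t-1", {"amount": 750}, "v1")
print(rec.outcome)   # ALLOW
print(replay(rec))   # True, even though v2 would now return HOLD for the same inputs
```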

What the system does reflects what the policy says — with the version active at decision time on the record.

Two commands. Verifiable output. No dashboard. No summary.

`python -m core.zdg_control_center explain --task-id <id>`

Returns the decision record: inputs evaluated, policy version, outcome, and approval status. One command, one auditable answer.

`python -m core.zdg_control_center audit-integrity`

Verifies the evidence chain across all governed runs. If the chain has no gaps, no decision was made without a record.
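One common way to make an evidence chain verifiable is a hash chain, where each entry's hash commits to the previous entry's hash. The sketch below assumes that design; the page does not say how `audit-integrity` works internally, and `append`, `verify`, and `GENESIS` are hypothetical names. It detects edited, removed, or reordered entries. Detecting truncation of the newest entries would additionally require anchoring the latest hash somewhere outside the log.

```python
import hashlib
import json
from typing import Any, Dict, List

GENESIS = "0" * 64  # hypothetical starting value for the first link


def _digest(prev_hash: str, payload: Dict[str, Any]) -> str:
    # Canonical JSON so the same payload always produces the same hash.
    body = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


def append(chain: List[Dict[str, Any]], payload: Dict[str, Any]) -> None:
    """Append an entry whose hash commits to the previous entry's hash."""
    prev = chain[-1]["hash"] if chain else GENESIS
    chain.append({"payload": payload, "prev": prev, "hash": _digest(prev, payload)})


def verify(chain: List[Dict[str, Any]]) -> bool:
    """True only if each entry links to its predecessor and its hash matches its payload."""
    prev = GENESIS
    for entry in chain:
        if entry["prev"] != prev or entry["hash"] != _digest(prev, entry["payload"]):
            return False
        prev = entry["hash"]
    return True


chain: List[Dict[str, Any]] = []
append(chain, {"task_id": "t-1", "outcome": "ALLOW"})
append(chain, {"task_id": "t-2", "outcome": "BLOCK"})
print(verify(chain))  # True
chain[0]["payload"]["outcome"] = "BLOCK"  # rewrite history
print(verify(chain))  # False
```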

Evaluates every agent action before it executes. Returns ALLOW, HOLD, or BLOCK. No action clears without it.
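A minimal sketch of a single pre-execution gate with the three verdicts named above. The rule format, the "most restrictive verdict wins" combination, and the names `gate`, `execute`, and `Rule` are assumptions for illustration. The one property taken directly from the text is that silence never permits: if no rule matches, the action is blocked.

```python
from enum import Enum
from typing import Callable, Dict, List, Optional


class Verdict(Enum):
    ALLOW = "ALLOW"
    HOLD = "HOLD"
    BLOCK = "BLOCK"


# Hypothetical rule format: return a verdict, or None if the rule does not apply.
Rule = Callable[[Dict[str, str]], Optional[Verdict]]


def gate(action: Dict[str, str], rules: List[Rule]) -> Verdict:
    """Single decision point: the most restrictive matching verdict wins."""
    verdicts = [v for v in (rule(action) for rule in rules) if v is not None]
    if not verdicts:
        return Verdict.BLOCK  # no implicit permission by silence
    if Verdict.BLOCK in verdicts:
        return Verdict.BLOCK
    if Verdict.HOLD in verdicts:
        return Verdict.HOLD
    return Verdict.ALLOW


def execute(action: Dict[str, str], rules: List[Rule], run: Callable[[], str]) -> str:
    """The only path to execution goes through the gate."""
    verdict = gate(action, rules)
    if verdict is Verdict.ALLOW:
        return run()
    return f"not executed: {verdict.value}"


rules: List[Rule] = [
    lambda a: Verdict.ALLOW if a["tool"] == "read_ticket" else None,
    lambda a: Verdict.HOLD if a["tool"] == "issue_refund" else None,
    lambda a: Verdict.BLOCK if a.get("target") == "prod_db" else None,
]
print(gate({"tool": "read_ticket"}, rules).value)   # ALLOW
print(gate({"tool": "issue_refund"}, rules).value)  # HOLD
print(gate({"tool": "drop_table"}, rules).value)    # BLOCK (no rule matched)
```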

Records every governed event with replay fidelity. What happened, what was decided — all replayable, none reconstructed.

Surfaces behavioral signals — reasoning drift, escalation, deception — during the run. Not in the post-mortem.
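To show what "during the run" means in practice, here is a deliberately narrow sketch that covers only escalation: each step is checked as it happens, and the run stops at the first step that exceeds the granted privilege. The privilege table, `RunMonitor`, and `observe` are hypothetical. Detecting reasoning drift or deception takes far more than this and is not attempted here.

```python
from dataclasses import dataclass, field
from typing import Dict, List

# Hypothetical privilege levels; a real deployment would define its own.
PRIVILEGE: Dict[str, int] = {"search": 0, "read_record": 1, "update_record": 2, "grant_access": 3}


@dataclass
class RunMonitor:
    granted_level: int
    signals: List[str] = field(default_factory=list)

    def observe(self, step: int, tool: str) -> bool:
        """Check one step in-flight; return False to stop the run on an escalation signal."""
        # Unknown tools are treated as above every known level.
        level = PRIVILEGE.get(tool, max(PRIVILEGE.values()) + 1)
        if level > self.granted_level:
            self.signals.append(
                f"step {step}: escalation to {tool} (level {level} > {self.granted_level})"
            )
            return False
        return True


monitor = RunMonitor(granted_level=1)
for step, tool in enumerate(["search", "read_record", "grant_access", "search"]):
    if not monitor.observe(step, tool):
        break  # stop here, not in the post-mortem
print(monitor.signals)  # ['step 2: escalation to grant_access (level 3 > 1)']
```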

Human judgment is explicit and bound to execution. Approval is recorded, not implied by inaction.
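A minimal sketch of "approval is recorded, not implied by inaction", assuming held actions wait on a named approver within a fixed window. `ApprovalRequest`, `may_execute`, the timeout, and the status strings are hypothetical. An unanswered request expires instead of defaulting to approval, and a decision arriving after the window is refused.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional


@dataclass
class ApprovalRequest:
    task_id: str
    requested_at: datetime
    timeout: timedelta
    approver: Optional[str] = None
    approved: Optional[bool] = None
    decided_at: Optional[datetime] = None

    def record(self, approver: str, approved: bool, at: datetime) -> None:
        """Store an explicit, attributable decision made inside the approval window."""
        if at - self.requested_at >= self.timeout:
            raise ValueError(f"{self.task_id}: approval window closed")
        self.approver, self.approved, self.decided_at = approver, approved, at

    def status(self, now: datetime) -> str:
        if self.approved is True:
            return "APPROVED"
        if self.approved is False:
            return "REJECTED"
        # No response is never treated as consent.
        return "EXPIRED" if now - self.requested_at >= self.timeout else "PENDING"


def may_execute(req: ApprovalRequest, now: datetime) -> bool:
    return req.status(now) == "APPROVED"


t0 = datetime(2024, 1, 1, tzinfo=timezone.utc)
req = ApprovalRequest("t-9", t0, timedelta(hours=4))
print(may_execute(req, t0 + timedelta(hours=5)))   # False: silence expired, nothing approved

req2 = ApprovalRequest("t-10", t0, timedelta(hours=4))
req2.record("reviewer-1", True, t0 + timedelta(hours=1))
print(may_execute(req2, t0 + timedelta(hours=2)))  # True
```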

For organizations deploying AI in consequential workflows — where decisions must be defended, approvals recorded, and evidence must survive scrutiny.

The Flight Recorder in developer form. Instrument your agent, capture governed runs, and produce verifiable output from the first deployment.
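For a sense of what instrumenting an agent can look like, here is a hypothetical decorator that routes each tool call through a policy check and records it whether or not it runs. It is not the Flight Recorder's SDK: `governed`, `RUN_LOG` (an in-memory list standing in for a durable, append-only store), and the string verdicts are assumptions. Tools take keyword arguments only, so inputs are recorded by name.

```python
import functools
import time
from typing import Any, Callable, Dict, List

RUN_LOG: List[Dict[str, Any]] = []  # stand-in for a durable, append-only store


def governed(policy: Callable[[str, Dict[str, Any]], str]):
    """Wrap a tool so every call is checked first and recorded, whether or not it runs."""
    def decorator(fn: Callable[..., Any]) -> Callable[..., Any]:
        @functools.wraps(fn)
        def wrapper(**kwargs: Any) -> Any:
            verdict = policy(fn.__name__, kwargs)
            entry = {"tool": fn.__name__, "inputs": kwargs, "verdict": verdict, "ts": time.time()}
            RUN_LOG.append(entry)  # recorded before anything executes
            if verdict != "ALLOW":
                raise PermissionError(f"{fn.__name__}: {verdict}")
            entry["result"] = fn(**kwargs)
            return entry["result"]
        return wrapper
    return decorator


def policy(tool: str, inputs: Dict[str, Any]) -> str:
    return "ALLOW" if tool == "lookup_order" else "HOLD"


@governed(policy)
def lookup_order(order_id: str) -> str:
    return f"order {order_id}: shipped"


@governed(policy)
def cancel_order(order_id: str) -> str:
    return f"order {order_id}: cancelled"


print(lookup_order(order_id="A17"))  # order A17: shipped
try:
    cancel_order(order_id="A17")
except PermissionError as err:
    print(err)  # cancel_order: HOLD
print([e["verdict"] for e in RUN_LOG])  # ['ALLOW', 'HOLD']
```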

Governance for AI that acts should look like operational control — not retrospective explanation.

The scan identifies where your governance posture is absent or undefendable. The platform gives you the infrastructure to close those gaps.